Turtles

Stress-testing arguments with recursive logic

“Turtles” is my shorthand for infinite regress, or the rebuttal “it’s turtles all the way down.” Infinite regress is a kind of justification that relies on a never-ending chain of assertions. At first glance, the justification may appear sound, but continued examination reveals that the proposition does not point to any ground truth. For example, if the earth is carried on the back of a turtle, then what carries the turtle? Why, another turtle of course!

I adopted turtles as my colloquial shorthand long before encountering the formal term infinite regress. This had the unfortunate consequence that I would sometimes end conversations abruptly, shouting furiously about spurious turtles. But I still think the colloquialism has utility, because it lets us apply the challenge of infinite regress more broadly, to critique any argument that starts to buckle under repeated recursive application (even finitely many rounds of it).

The presence of turtles might not negate an argument outright, but it ought to compel us to consider the limitations of its explanations.

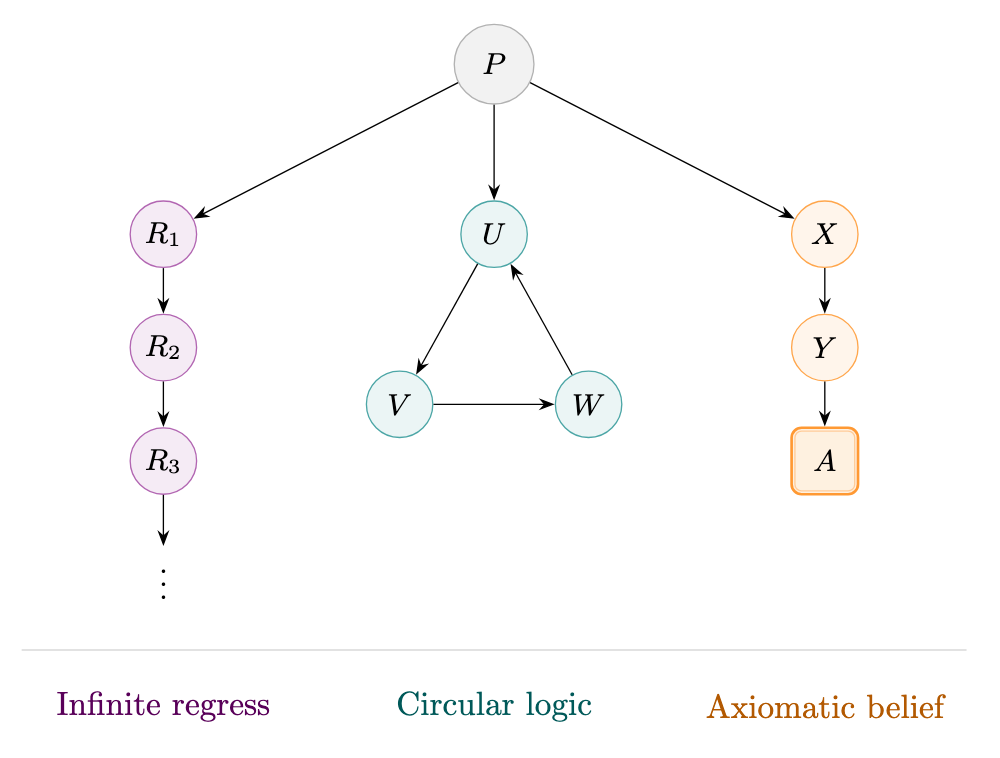

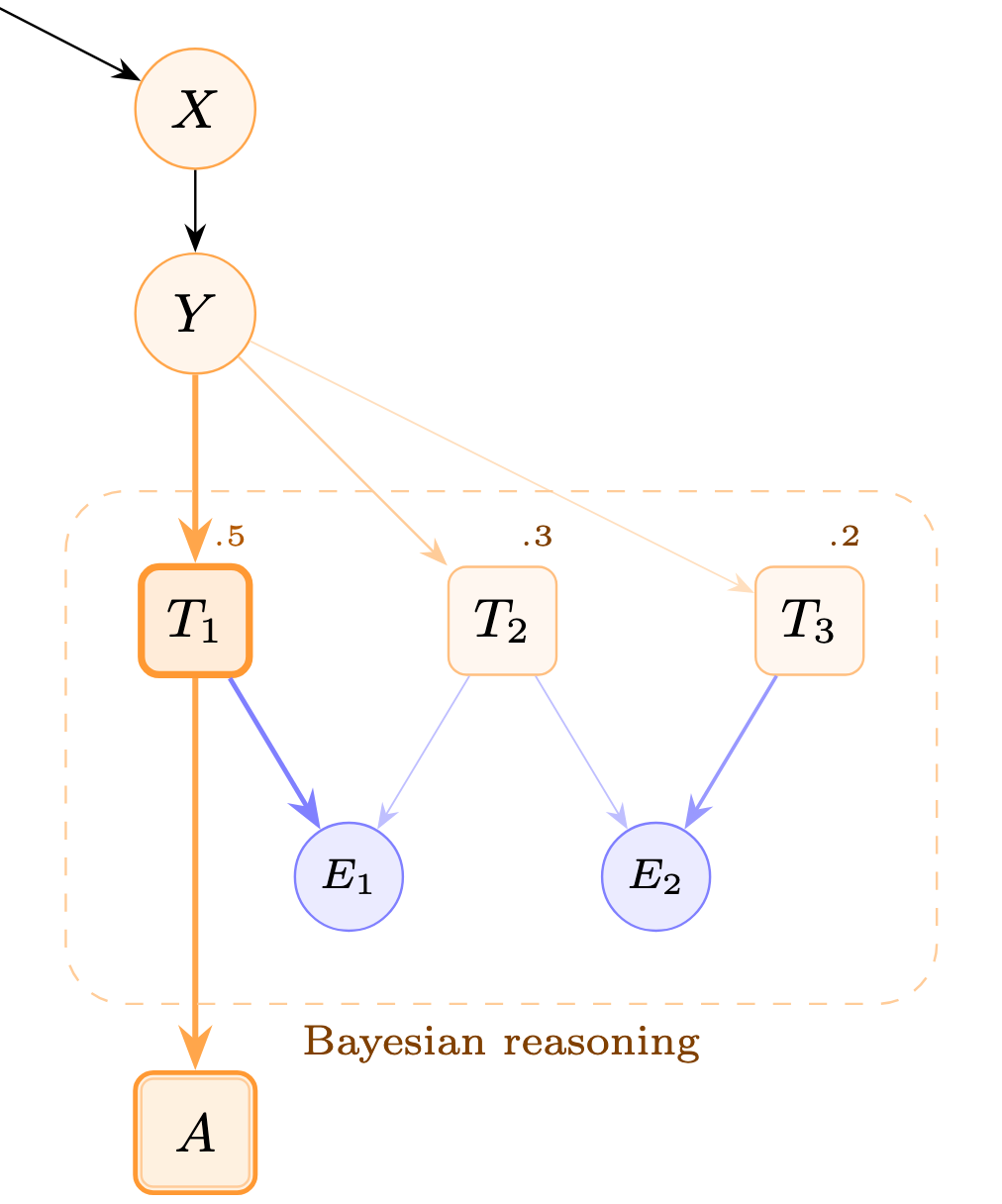

Inductive reasoning and proofs all eventually hit upon the limits of justification. The Münchhausen Trilemma denotes three problematic yet fundamental types of justification: infinite regress, circular logic and axiomatic belief (or dogma).

Consider a key proposition P, which we seek to justify with three separate arguments, R, U and X. We can model our arguments for P as a directed graph, with each node representing a distinct proposition, and each edge representing an epistemic justification. Though each node may try to provide final justification, further inquiry can always advance us on the graph.
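To make the graph picture concrete, here is a minimal sketch in Python. The specific wiring is my own assumption for illustration: R is justified by an endless supply of fresh propositions (R', R'', and so on), U and a hypothetical node V justify each other, and X has no further justification. The function names `supports` and `classify` are likewise made up, and the walk only follows the first justification of each node, which is a simplification.

```python
from typing import Callable, List


def supports(node: str) -> List[str]:
    """Return the propositions offered as justification for `node`.

    Hypothetical example graph, not taken from any real argument.
    """
    finite = {"P": ["R", "U", "X"], "U": ["V"], "V": ["U"], "X": []}
    if node in finite:
        return finite[node]
    if node.startswith("R"):
        # R -> R' -> R'' -> ...: there is always another turtle.
        return [node + "'"]
    return []


def classify(start: str, supports: Callable[[str], List[str]], max_depth: int = 50) -> str:
    """Follow the first justification at each step and report how the chain ends."""
    seen: List[str] = []
    node = start
    for _ in range(max_depth):
        if node in seen:
            return "circular"  # we came back to a proposition already used
        seen.append(node)
        justifications = supports(node)
        if not justifications:
            return "axiom"  # nothing further is offered; belief stops here
        node = justifications[0]
    # Still going after max_depth steps: treat it as turtles.
    return "regress"


results = {arg: classify(arg, supports) for arg in supports("P")}
print(results)
```

The `max_depth` cutoff is the practical version of the point above: we never need to walk an infinite chain, only far enough to see that no ground is coming.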

When forming an argument we normally want to avoid infinite regress and circular logic, and strive to ensure our axiomatic beliefs are few and foundational.[1]

Consider then how we might look for turtles in a current popular idea like simulation theory.

Simulation theory supposes that our world is extremely likely to be computer simulated. The reasoning is that any advanced alien civilization would develop simulation technology, given expected advances in computing, and that simulated worlds would then quickly outnumber real worlds. (And if we don’t live in a simulated world, it’s only because it’s impossible to simulate a world, or because civilizations collapse before it’s achieved.)

Simulation theory causes me to grumble moodily about turtles.

Let’s accept the basic premise that it is extremely likely we are in a simulation. Accepting this premise should lead us to conclude that our own civilization, or any alien civilization within our simulation, will also seek to create simulations. This chain of reasoning continues ad infinitum, until we must suppose that there are an infinite number of simulated realities, all infinitely removed from a hypothetical base reality that runs every one of them. This would require the base reality to have a computer with infinite energy, which would seem to violate thermodynamics.[2]

I would not say that my turtle critique is an outright refutation of simulation theory, but it does seem to imply some critical limitation on simulations which hobbles the argument. For example:

We could claim new fundamental physics in the base reality… but basing an argument on an appeal to unknown fundamental physics is like appealing to magic.

We could claim that our simulation is unable to generate further simulations, or that the number of simulated worlds is capped… but this undermines the core hypothesis that simulated worlds will vastly outnumber real worlds. If we can never simulate worlds, why suppose that a hypothetical civilization could?

We could claim that only our own experience is simulated… but this reduces the argument to a contemporary solipsism.

(Notice the final defense of simulation theory is solipsism, i.e. the fundamental challenge necessitating axiomatic belief—we must choose to believe the external world exists! All claims about external reality at least require an axiomatic belief that external reality exists.)

So simulation theory at least exhibits major cracks when pressed for a recursive application of its own terms. I would anticipate there are more clever strategies to mitigate the damage.

When looking for turtles, we don’t need to search for an infinite recursion which breaks the entire argument. It might be sufficient to demonstrate that a theory gets increasingly “weird” under progressive application.

As mentioned in a previous blog post, I am quite interested in moral obligation. Though I am very sympathetic to the concept, I am nonetheless resistant to maximalist interpretations, i.e. the idea that we are obliged to do the most good possible. Mostly this is because the ideas of “good” and “values” are all tied up, in ways that traditional Christian morality obfuscates, and to strive for a strictly altruistic or “saintly” life is not universalizable.

Effective altruism in its various manifestations is the contemporary movement which comes closest to this altruistic injunction, even if contemporary practitioners might distance themselves from Peter Singer’s original, strong formulation of the idea (i.e., the idea that one should keep giving up to the point where further giving would cause oneself severe suffering). Though I would not attack EA per se (as it seems to me a movement that has done a large amount of good), altruism can exhibit strange behavior under turtling, which may help set some reasonable limits on its application.

I’ll focus my critique on altruism directed at the reduction of suffering, a goal which, though very good, does not tell us very much about what makes for a good life beyond “not suffering.”

Suppose that one has taken Peter Singer’s argument to heart and maximally devotes one’s life to the reduction of suffering. Consider further that one has then taken altruism as a telic activity, meaning we consider altruism itself as a good, rather than a means to an end (e.g., we are living to reduce suffering). Though there is certainly enough suffering in the world for one person to live entirely for altruism, the utility of altruistic activity will decrease for every additional altruistic individual. As more people become altruistic, does the altruist continue to define a good life around altruism? Should the process continue, up until everyone is an altruist, each seeking to minimize each other’s suffering?

Such an end point seems obviously absurd. I do not wish to strawman EA. I would not disagree that there ought to be some equilibrium between altruism and status quo behavior, which likely involves greater altruism than exhibited today.

But I do think this turtling of altruistic principles highlights an important tension between moral obligation and value creation. The utility of altruism is already much lower than it was 20, 50 or 200 years ago. In Bleak House, Dickens satirized his character Mrs. Jellyby for charity directed abroad, when so much impoverishment was visible around her. That critique had some weight in the 19th century, when domestic suffering could be quite severe, but makes less sense when extreme poverty has been nearly eliminated in the West. Yet the same progress is occurring abroad, as global poverty rates decline. The need for assistance persists, but not to the degree that we need maximal commitment to altruism.

As a counterpoint, keep in mind my critique is of altruism targeting a reduction of suffering. One easy tweak might be to shift from a reduction of suffering to an increase of “joy,” or whatever your chosen measure of a good life. Reduction of suffering is perhaps implicit in an increase of joy. I expect most effective altruists would endorse this goal, even if the discourse tends to center around reduction of suffering and other risks.

Focusing on joy also allows us to endorse atelic activities, which have no formal goal. An example might be spending time with family, or learning the violin, or writing a blog post. Atelic activities are highly resistant to turtles. We do them because they bring us joy, and we axiomatically believe that joy is good.[3]

Telic activities always have some susceptibility to turtling. If you derive value from climbing a corporate ladder (each rung a successful application of your value system), what happens when you reach the top? If you derive value from AI research, what happens when you’ve built ASI? At its simplest, turtling is nothing more than a technique to follow arguments and belief systems to their logical extremes. Use it!

[1] Axiomatic beliefs are often left unaddressed, unless they are strong claims like the existence of god. For example, my belief in the existence of external reality is axiomatic, but I do not feel the need to anchor every essay with an assertion that external reality exists. There is gradation in belief upwards of axiomatic beliefs (for example, I believe in the scientific method, because I believe it has yielded results before and I believe it will again), and we do sometimes insert axiomatic beliefs in lieu of rational arguments (for example, I have a near-axiomatic belief in moral obligation, even if my thinking here remains poorly defined).

Note that the frontier of knowledge is usually expanded by adding justifications (resolving uncertainties), not by replacing axioms. For example:

[2] Even if we assumed our simulation was “lower resolution,” positing infinitely many worlds still requires an infinitely high resolution in the base reality, or non-trivial claims about the feasibility of e.g. halving resolution at each step (forming a geometric series).
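As a rough sketch of the geometric-series escape hatch, assume (and these are my assumptions, not established facts) a single chain of nested simulations, a base-level cost $C$, and a cost that halves with resolution at each level $k$. Then the total cost stays finite:

$$
\sum_{k=0}^{\infty} \frac{C}{2^{k}} = C \cdot \frac{1}{1 - \tfrac{1}{2}} = 2C
$$

The sum converges only because each level is strictly cheaper than the one above it by a fixed ratio. If resolution stays constant, every term is $C$ and the total diverges, which is the infinite-energy problem again.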

[3] I employ “joy” rather than “happiness,” as I would like to invoke some stand-in for a measure of “the good life.”