AI Fatalism

If superintelligence carries existential risk for humankind, then we must approach it cautiously

Gentlemen! What use are empty arguments? You want proof; I propose to experiment on myself whether a man can, of his own will, arrange his own life, or whether each of us has been appointed our fateful moment in advance…

— Lermontov, A Hero of Our Time [1]

Dwarkesh Patel

And why is [Grok] gonna then care about human consciousness?

Elon Musk

These things are only probabilities, they’re not certainties. So I’m not saying that for sure Grok will do everything, but at least if you try, it’s better than not trying. At least if that’s fundamental to the mission, it’s better than if it’s not fundamental to the mission.

— Dwarkesh Patel and Elon Musk, Dwarkesh Podcast

In “The Fatalist,” the final section of Lermontov’s 1840 novel A Hero of Our Time, an officer in the Russian army puts a pistol to his head in a test of fate. The officer, Vulich, has pulled the gun from a cabin wall, so we do not know if it is loaded. He calls for bets. If he survives, then his death was not predestined for this evening. Another officer, Pechorin, takes the bet, wagering against predestination and on Vulich’s death.

When Vulich pulls the trigger, nothing happens. Yet the gun was loaded. When he then points it at an old cap, the pistol finally fires. Surviving thanks to a malfunction, Vulich has made his proof of fate.

In 2023, OpenAI established the superalignment team, meant to ensure that artificial superintelligence, or ASI, would remain under human control. For the purposes of this article, we can take ASI to be a strict superset of artificial general intelligence, or AGI, in which machine intelligence exceeds that of the most gifted humans. In 2024, the superalignment team was disbanded as multiple leaders left the organization. In reference to his departure, Jan Leike, now alignment team lead at Anthropic, wrote, “safety culture and processes have taken a backseat to shiny products.”

In the exchange with Dwarkesh Patel quoted above, Elon Musk asserts that we can only make probabilistic assumptions as to whether ASI will allow human life to continue. He later downplays the importance of AI risk, stating that a far more important measure is the total intelligence or consciousness [2] in the universe. [3]

Humanity is incidental, so long as intelligence expands.

After Vulich survives his game of Russian roulette, Pechorin is shocked into a sudden belief in fate. Walking alone afterwards, he grows melancholic. He stares at the stars above, envious of the Homeric heroes [4] of old, who believed that the heavens themselves participated in our struggles. He recognizes that modern man has no such luxury of belief.

Pechorin is distraught. Convinced all outcomes are predestined, he fails to find purpose in life.

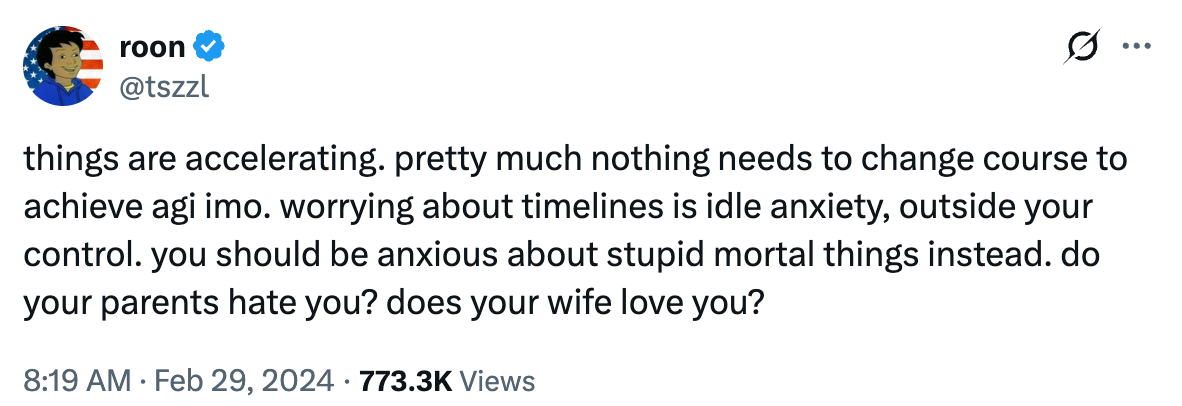

Roon is the X account of a member of OpenAI’s technical team, known for internet humor and a persistent defense of the industry’s pursuit of AGI.

In 2024 he made a famous defense of the inevitability of AGI, asking us to instead focus on mortal concerns, like family. He has repeated similar claims many times since.

As quickly as he slipped into his reverie, Pechorin is reminded of the danger in dwelling on abstractions. He realizes he has taken Vulich’s supposed proof of fate with the same credulity as his forebears. Rather than focus on “metaphysical” problems, he decides to focus on reality, to look at what is “under his own feet.”

Over the past two decades, statistics has entered mainstream discourse as a rigorous means of dissecting our future. We can now use prediction markets to estimate the likelihood of almost any outcome. We do not see fate exactly as Lermontov imagined it, but we do have probabilities, which risk being treated as predestination by a different name.

Forecasts assert likelihood based on a present reading of events. As we take actions in the world, those probabilities shift. Statistics are a description of reality, but do not determine it. Events are determined by material actors, with individual responsibility for their decisions.

Consider elections, which, especially in a post-Nate Silver world, are anticipated through polls, surveys and predictive modeling. We can assess trends and behavior, can measure the impact of demography, all with the finesse of a science. But predictions are not a substitute for an election. Ultimately, an election is itself the realization of the public’s will. While we might do our best to forecast the result, we are all still individually accountable for our vote.

I worry that brainy, intellectual types—like one might encounter in an AI lab—are particularly prone to mistaking a model of reality for reality itself.

The frontier AI labs of Silicon Valley seem to have become trapped in their own dogged fatalism, convinced they are working toward a single inevitable conclusion. Some are motivated by transhumanist ideals. A few, like Musk, work with a nihilistic indifference toward the survival of mankind. Most point to the manifold benefits of AI, and accept this as proof enough that we must push their models further.

I do not myself doubt the benefits of artificial intelligence. I have written at length about Claude Code and am very familiar with developments in software engineering. I am aware that AI could be used for drug discovery, for addressing climate change, and for an automation of work that would allow us to live in leisure.

Though I am concerned about the destabilization that narrow AI (or pre-AGI technology) might cause, I have no doubt we might handle it with the same ingenuity with which we have handled past technological change. We fear job loss, but after the industrial revolution, we were able to rethink our entire model of the state, creating a welfare system and other safeguards for the unfortunate. Perhaps universal basic income or sovereign wealth funds will serve as equivalents in the age of AI. We fear military use of AI, but the fear of abuse did not stop us from harnessing nuclear power, or carefully deploying nuclear weapons. The risks are real, but I do not think the dangers of today’s AI are insurmountable.

What scares me is that I cannot see an equivalent approach for ASI. We cannot manage ASI risk with post-hoc policy, the way we do now with narrow AI, because ASI carries immediate existential risks, either through abuse or misalignment. If we do not have reliable control mechanisms for an ASI, some well-understood framework that gives humanity a good-enough guarantee of our survival, then I do not see any way to safely advance AI until those mechanisms are understood.

Turning back to reality after his musings on fate, Pechorin is startled to learn that Vulich has been murdered by a drunken Cossack. Cornered in an old hut, wielding a pistol and saber, the drunkard now threatens to shoot anyone who approaches him. The hut is surrounded by soldiers, unsure of what to do. As they discuss whether it might be better to just kill him, Pechorin volunteers a plan to catch him alive. Rushing in through a window, Pechorin surprises the drunkard from the side. The drunkard’s shot whizzes past him, and Pechorin seizes him without injury.

Both Pechorin and Vulich risk their lives. Vulich gambles cheaply on his life for the sake of a “metaphysical” argument. Pechorin’s motives are less clear, but we know he risks his life for the sake of other people, sparing other soldiers from his dangerous mission, and sparing the murderer from being shot himself.

Vulich embodies the nihilistic response to fatalism, where the value of our own lives is extinguished by a prevailing sense of inevitability. Pechorin, meanwhile, demonstrates a humanist response. Rather than surrendering to fatalism, he reasserts his agency, whatever the theoretical explanations for his behavior, and chooses to act for the good of humankind.

I am as unsure as anyone about how we avoid AI catastrophe, but I am sure it requires a refutation of fatalism.

PauseAI has a proposal for a general moratorium on the advancement of frontier intelligence. Perhaps the economic incentives are too high to stop now, but we could define intelligence or capability thresholds that we will not exceed until our theories of alignment and control catch up. If there is a safe way to build ASI, then a pause would only be temporary.

My views are not actually very different from what Anthropic themselves have publicly stated about AI risk, referring to a “pessimistic scenario” in which AI safety is unsolvable.

If we’re in a pessimistic scenario… Anthropic’s role will be to provide as much evidence as possible that AI safety techniques cannot prevent serious or catastrophic safety risks from advanced AI, and to sound the alarm so that the world’s institutions can channel collective effort towards preventing the development of dangerous AIs.

But Anthropic is not acting alone, and we have seen how existing incentives cause companies to deprioritize safety. What I would like to see is a legal codification of our responsibilities regarding the pessimistic scenario. Premature regulation could unnecessarily stifle innovation. But there is no excuse for stumbling into the worst-case scenarios unknowingly. We need guarantees that all American companies, and ultimately all nations, will act similarly if a pessimistic scenario is detected, and that we will do everything in our power to detect a pessimistic scenario in the first place.

Without such a coordinated approach to mitigating risk, we will make the same fatalistic gamble as Vulich, careening towards the unknown because we have forgotten the reasons not to. We must take the gun down from our heads. Technical possibilities do not dictate our actions. Coordination is harder than engineering, but just as necessary. A safer approach is possible without sacrificing the full benefits of AI.

[1] My own translation, from https://ilibrary.ru/text/12/p.7/index.html

[2] Musk does not clearly distinguish between consciousness and intelligence in his interview. His intent is probably closer to intelligence, given his focus on spreading AI through the solar system, rather than, say, pigeons.

[3] “In the long run, I think it’s difficult to imagine that if humans have, say 1%, of the combined intelligence of artificial intelligence, that humans will be in charge of AI. I think what we can do is make sure that AI has values that cause intelligence to be propagated into the universe.

xAI’s mission is to understand the universe. Now that’s actually very important. What things are necessary to understand the universe? You have to be curious and you have to exist. You can’t understand the universe if you don’t exist. So you actually want to increase the amount of intelligence in the universe, increase the probable lifespan of intelligence, the scope and scale of intelligence.

I think as a corollary, you have humanity also continuing to expand because if you’re curious about trying to understand the universe, one thing you try to understand is where will humanity go? I think understanding the universe means you would care about propagating humanity into the future. That’s why I think our mission statement is profoundly important. To the degree that Grok adheres to that mission statement, I think the future will be very good.” - Dwarkesh Podcast

[4] There is no direct reference to Greek mythology in the text. This is my own extrapolation.